Americans don’t often hear about “influence operations,” though recent history has been an exception to that rule, as news outlets and government officials have aggressively hyped the alleged threat of Russian covert ops aimed at swaying the U.S. election, or at least at sowing chaos in the run-up to it.

Indeed, much has been written not only about the Kremlin’s role in hacking the Democratic National Committee but also about Russia’s “troll army” and its activities spreading propaganda and disinformation on social media and in website comment sections. Major U.S. media outlets have said less, however, about parallel American efforts, though these are certainly ongoing, and not just against Russia. A report published earlier this year, for example, but only recently plucked from obscurity by the website PublicIntelligence.net, lays out in detail some of the sophisticated online influence operations the U.S. is waging against the Islamic State extremist organization.

A 2009 RAND Corporation study prepared for the Army defines influence operations as “the coordinated, integrated, and synchronized application of national diplomatic, informational, military, economic, and other capabilities in peacetime, crisis, conflict, and postconflict to foster attitudes, behaviors, or decisions by foreign target audiences that further U.S. interests and objectives.”

This same study also notes that there is a distinct public aversion to what are euphemistically called influence operations, which “accounts for the shifting definitions that have been given to a consistent set of activities over the second half of the 20th century, with the idea seemingly being that changing the names makes these activities more palatable to the American people.”

“In classical terms,” it continues, “strategic influence activities are broadly considered to be synonymous with public diplomacy or propaganda. The term ‘propaganda,’ however, has become quite tainted in the United States and other Western societies insofar as its popular usage assumes that propaganda must consist entirely of falsehoods, and it is rarely used by U.S. government officials to describe their activities.”

As made clear by both the 2009 study and the more recent report — which was a multi-agency effort involving the Joint Chiefs of Staff (JCS), U.S. Army Special Operations Command (USASOC), the Department of Homeland Security (DHS), and a private company called NSI, among others — modern “influence operations” are little more than psychological operations (PSYOP, in military and spy jargon) by a different name, or what was once commonly known as propaganda and psychological warfare.

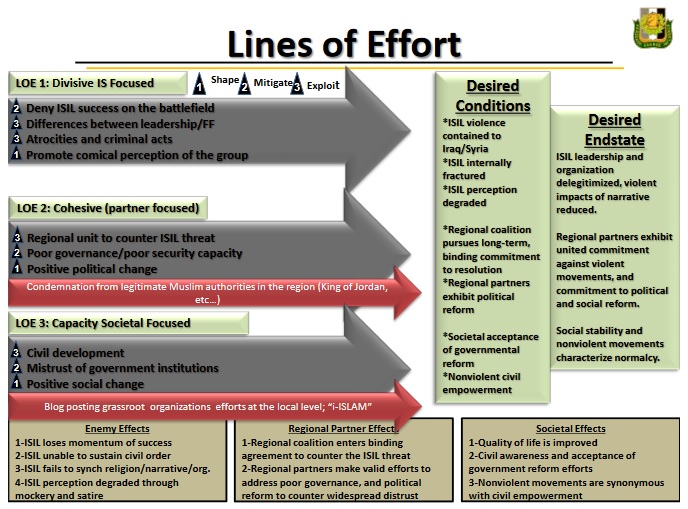

The new report, titled “Counter-Da’esh Influence Operations: Cognitive Space Narrative Simulation Insights,” describes an event held in April, following a similar one last December, in which nearly 100 PSYOP professionals took part in what amounted to a simulated online trolling exercise aimed at countering ISIS messaging and at persuading populations susceptible to that messaging.

PSYOP agents appear to have created intricate “personas” of credible anti-ISIS spokespersons (i.e., non-Westerners, to begin with), while other operators, or what might more properly be termed intelligence “assets,” played the roles of various members of target audiences such as “violent Salafists,” “passive Salafists,” “Sunni tribalists,” and so on. These roles were meant to represent audiences in North Africa, as well as in the Nineveh (or Ninewa) and Anbar provinces of Iraq.

The language used to describe the exercise is strikingly similar to that used by Cass Sunstein, a Harvard Law School professor and recent appointee to a new Pentagon “innovation advisory board,” in a 2008 essay that advocated the “cognitive infiltration of extremist groups,” although the “extremists” Sunstein had in mind at the time were 9/11 “truther” conspiracy theorists rather than ISIS jihadists. The counter-Da’esh PSYOP document, for its part, refers to “cognitive arenas” and the “cognitive battlespace.”

The document openly discusses experiments to test messaging aimed at fomenting guerrilla warfare and at establishing an underground support network for the envisioned insurgency, one that “funnels funds to the resistance movement’s shadow government.” Carefully crafted messaging is a major focus throughout the report.

“Words have true power only when linked together effectively into a narrative. Narratives create a story and framework that the human mind can understand in order to break down cognitive barriers,” the document states.

“Narratives are transformative and have power, often using existing narratives to challenge dominant paradigms. It is essential to begin reframing and changing stories in the dominant culture to create more political possibility for social movements.” Indeed, in the report the special operators behind the elaborate PSYOP drill come across as somewhat fixated on the possibility of manufacturing social movements; one section even includes a photo of the raised-fist symbol used by a wide range of protest movements.

The focus on messaging, in fact, extends well beyond written or spoken narratives, with considerable attention given to the scientific evaluation of what makes imagery effective. In planning what amount to social media “memes” to deploy against potential ISIS sympathizers, the report’s authors describe going to great lengths, and using state-of-the-art technology, to determine which images and parts of images are most effective at catching a viewer’s attention.

Overall, the “Counter-Da’esh Influence Operations” report offers an illuminating look into the world of contemporary psychological operations. It has been known for some time that British intelligence, which collaborates very closely with the U.S., has been involved in these sorts of operations, and that it even uses such psychological manipulation domestically against U.K. nationals. It has also been public knowledge that algorithms aimed at predicting terrorist attacks and censoring jihadist social media users (or, to get technical, “terrorist content”) have been in development.

And while it has been known for years that the FBI, for example, often catches would-be jihadists by luring them into illegal action through deceptive online communications and personas, the extent to which professional psychological operators are involved, even against foreign targets, is striking.

While the “Counter-Da’esh Influence Operations” report may not be classified, and though it has been quietly available online for several months without attracting much attention, it is nonetheless revealing. In recent weeks and months, U.S. officialdom has seemingly railed incessantly against the insidiousness of enemy propaganda, whether from ISIS, the Russians, the Chinese, or anyone else. It could not be clearer, though, that our own government is just as deeply involved in such intrigue, if not more so.


One thought on “Anti-ISIS influence ops drill reveals parts of U.S. deception strategy”