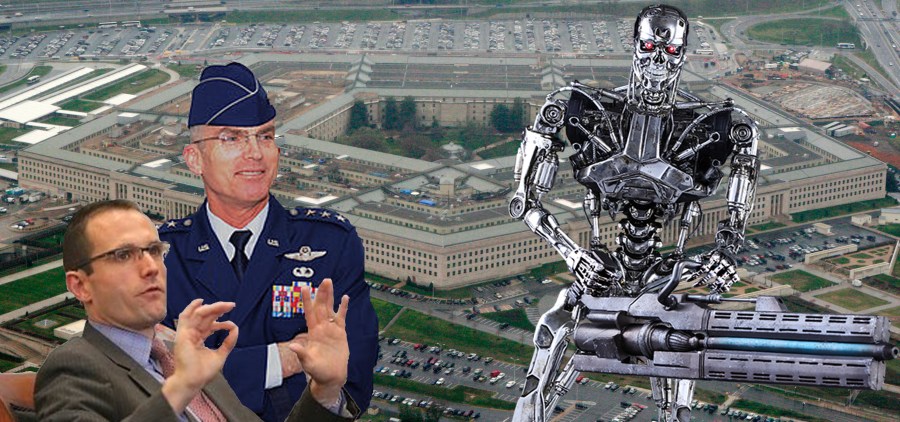

Last week at an Air Force Association event, William Roper, head of the Pentagon’s Strategic Capabilities Office, shared some of his thoughts on artificial intelligence, and the role he envisions for it in the next major war.

Except that the outbreak of this hypothetical future conflict “shouldn’t look like war at all,” as Defense One technology editor Patrick Tucker paraphrases Roper’s comments. “Instead, imagine a sort of digital collection blitzkrieg, with data-gathering software and sensors setting of (sic) alarms left and right as they vacuum up info for a massive AI. Whoever collects the most data on Day One just might win the war before a single shot is fired,” Tucker writes.

Tucker describes Roper as “the military’s foremost go-to guy to figure out how to use advances in technology to secure military advantage and how the enemy might do the same.” He also compares him to “the title character in 1964’s Stanley Kubrick film Dr. Strangelove.”

Roper, for his part, drew his own comparison in his discussion last week — between data and oil.

The military, Roper says, is “still focusing on data in a 1990s way: something you use to go into a fight and win and after that, the data, its raison d’être is over,” in contrast with Silicon Valley, where data is “wealth and fuel. Your data keeps working for you. You stockpile the most data that you can and train that to teach and train autonomous systems.” Roper apparently sees much promise in such systems.

“Almost nowhere do I see a technology that’s current that offers as much as autonomy,” he reportedly said. “We’re working very hard to produce a learning system.”

Nevertheless, “Roper, like every Defense Department leader [Defense One] talked to about autonomy and weapons, was careful to emphasize that the Pentagon is not looking to replace human decision-making” with fully autonomous killer AI, Tucker writes.

Similarly, Mark Pomerleau of C4ISRnet writes that “for all intents and purposes, the military is not interested in what the vice chairman of the Joint Chiefs of Staff calls general AI.”

(“Narrow AI is teaching a machine to perform a specific task, while general AI is the T-1000, [Vice Chairman] Gen. Paul Selva said April 3 at an event hosted by Georgetown University, referencing the shape-shifting robot assassin from the ‘Terminator 2’ movie,” Pomerleau clarified.)

“I don’t think we need to go there,” Selva said of general AI, which would, in other words, be a machine capable of thinking like a human in every meaningful way, while also far exceeding humans in the ways machines already do. “I think what we can do is apply narrow AI to empower humans to make decisions in an every (sic) increasingly complex battlespace,” Selva reportedly said.

And yet, despite his own apparent fears about what AI might end up doing, Selva also seemed to frame the development of general AI for military purposes as a legitimate “issue,” one which, a slightly more hawkish strategist might argue, requires the United States to develop autonomous killer robots as a preemptive move, before its military rivals (real or imagined) do so.

“The issue is whether or not a person or a country or an adversary would take narrow AI and build it into a system that allows the weapon to take a given life without the intervention of a human,” Selva reportedly said.

Even as Selva worries about “an adversary” developing artificial intelligence into a truly dystopian nightmare technology, though, the companies pushing hardest to develop “general AI” are the very Silicon Valley firms, like Google, that the SCO’s Roper so admires. High-profile Silicon Valley billionaires Mark Zuckerberg and Elon Musk, meanwhile, have also recently been pushing for the development of “brain-computer interface” technology, which would apparently use some sort of yet-to-be-determined brain-chipping or wiring process to meld human and machine intelligence and, presumably, to create some variety of “general AI” in the process.

Selva’s comments this week are not his first comparing “general AI” to the robots from the Terminator movies. In fact, the potential danger posed by general AI has its own name at the Pentagon: “the Terminator conundrum.”

Yet such concerns from top military technology strategists, who are privy to top-secret research, nonetheless seem to mean little to Silicon Valley tech honchos like Eric Schmidt, chairman of Google’s parent company, Alphabet, who would like us all to “stop freaking out” about AI. (Despite Schmidt’s role as chairman of the Pentagon’s “Defense Innovation Advisory Board,” Google as a company does not seem to value the military, or the experience and input of its current and former members, to nearly the same extent that the military’s leadership appears to admire Silicon Valley, as evidenced by Google’s recently reported treatment of some of its ex-military employees.)

Based on what they’re saying publicly, everyone from military planners to tech executives can see hypothetical dangers in developing and then deploying artificial intelligence, but they claim that such doomsday scenarios are unrealistic. Yet many participants in the AI debate, in both the public and private sectors, also appear to have major blind spots.

While Gen. Selva’s comments seemed to leave the door open for more aggressive strategists to argue for creating “lethal autonomous weapons systems” to preempt another nation’s doing so, Pomerleau reports that Selva “added that he has been an advocate for some kind of convention on how states use AI, but offered skepticism regarding how it could be enforced.”

This may be, in part, simply a public relations-oriented response to progress made at the United Nations late last year towards an international ban on killer robots. Nevertheless, it is a step in the right direction, and any private sector AI researchers who have not already endorsed such a ban should follow suit.
