YouTube, the widely used video uploading site owned by Google, which is in turn owned by Alphabet, whose former chairman and current board member Eric Schmidt is a key adviser to the Pentagon, has announced that it will begin showing information alongside videos “in the coming months” from Wikipedia, the web encyclopedia that “anyone can edit” and that is notoriously unreliable (as the site itself admits). The move is a Cass Sunsteinesque effort to combat the perceived threat posed by “conspiracy theories.”

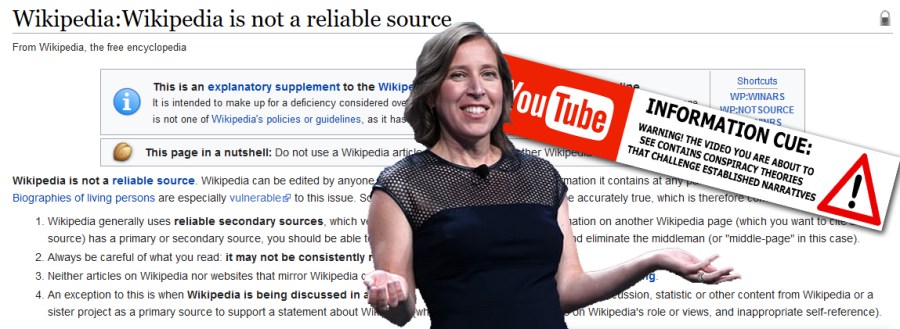

“We will show a companion unit of information from Wikipedia showing that here is information about the event,” the company’s CEO, Susan Wojcicki, reportedly said at the annual South by Southwest festival in Austin, Texas, this week. The unit is meant to accompany videos about controversial current or historical events that some users of the site suspect of involving a conspiracy or official coverup.

“We’re always exploring new ways to battle misinformation on YouTube,” a YouTube spokeswoman reportedly said, adding that third-party sources besides Wikipedia will also be used in the “companion unit.”

“It is not clear how the Wikipedia unit will help during these kinds of breaking news events, since it will depend on YouTube being on top of new conspiracy theories emerging on the platform – something it has not done effectively so far – and on the user-generated Wikipedia article being accurate as the news unfolds,” according to the Guardian. It is also unclear who at YouTube will determine, and how, which “conspiracy theories” require the “companion unit” treatment (video bloggers speculating about where the “Russiagate” investigation might lead, for example?), whether the company will provide similar accompanying information from sources it deems reputable in cases of conspiracies that have been proven true, or what exactly it even means by “conspiracy theories.”

The day after reports of YouTube’s plan emerged, meanwhile, Wikipedia said it had not been informed beforehand. “We were not given advance notice of this announcement,” the Wikimedia Foundation stated on Twitter.

“We are always happy to see people, companies, and organizations recognize Wikipedia’s value as a repository of free knowledge. In this case, neither Wikipedia nor the Wikimedia Foundation are part of a formal partnership with YouTube,” it also noted.

Critics were quick to question YouTube’s approach.

“There is a substantial danger here that YouTube is treating wikipedia like a commons only in the sense of ‘tragedy of the commons’ – ie. It is saving money itself by shunting the work onto a common good, potentially killing that good in the process,” technologist and writer Tom Coates said on Twitter.

Jamie Cohen, an assistant professor at Molloy College in Rockville Centre, New York, similarly told USA Today that YouTube is essentially shifting responsibility from itself to Wikipedia’s volunteer editors.

“If they want a true, authority for validation, they should go to the source of the information,” Cohen reportedly said.

“He suggested a trusted news organization would make more sense,” USA Today added.

Whether it makes sense, or is at all desirable from an average YouTube user’s standpoint, to append these disclaimers or “information cues” to user-uploaded videos, drawing them from mainstream media outlets that already have their own very prominent platforms (namely, the, um… well… mainstream media), is a question worthy of further debate. If people don’t want to see videos made by random internet users that may be entirely devoid of worthwhile information, or may provide a unique perspective that’s been omitted from mainstream coverage, perhaps they should watch the evening news instead.

For many years now, YouTube has been one of the primary outlets through which the otherwise voiceless (i.e., anyone with an internet connection, rather than anyone with an Ivy League education and a job at the New York Times) can produce their own media content and get their voices heard. The fact that it now wishes to play a greater role as gatekeeper of that content is disturbing, and raises questions as to why the company feels it must assert closer corporate control over narratives that would otherwise spread and develop (or alternatively be debunked and ridiculed) through an essentially organic process of public reaction to information.
